GANPOP: Generative Adversarial Network Prediction of Optical Properties from Single Snapshot Wide-field Images

Chen MT, Mahmood F, Sweer JA, Durr NJ

IEEE Transactions on Medical Imaging 39:6 2020

[Journal Link] [Preprint]

Abstract

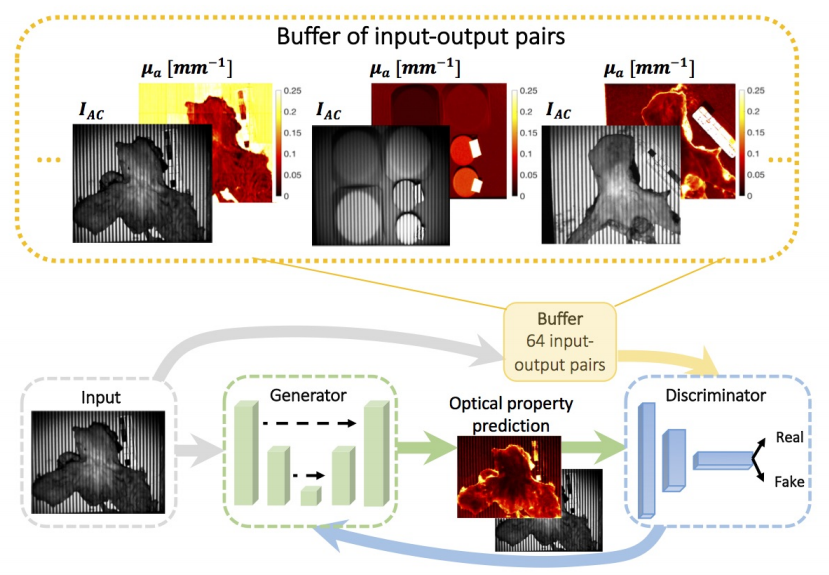

We present a deep learning framework for wide-field, content-aware estimation of absorption and scattering coefficients of tissues, called Generative Adversarial Network Prediction of Optical Properties (GANPOP). Spatial frequency domain imaging is used to obtain ground-truth optical properties at 660 nm from in vivo human hands and feet, freshly resected human esophagectomy samples, and homogeneous tissue phantoms. Images of objects with either flat-field or structured illumination are paired with registered optical property maps and are used to train conditional generative adversarial networks that estimate optical properties from a single input image. We benchmark this approach by comparing GANPOP to a single-snapshot optical property (SSOP) technique, using a normalized mean absolute error (NMAE) metric. In human gastrointestinal specimens, GANPOP with a single structured-light input image estimates the reduced scattering and absorption coefficients with 60% higher accuracy than SSOP while GANPOP with a single flat-field illumination image achieves similar accuracy to SSOP. When applied to both in vivo and ex vivo swine tissues, a GANPOP model trained solely on structured-illumination images of human specimens and phantoms estimates optical properties with approximately 46% improvement over SSOP, indicating adaptability to new, unseen tissue types. Given a training set that appropriately spans the target domain, GANPOP has the potential to enable rapid and accurate wide-field measurements of optical properties.